The Interplay of Technology, Ethics, and Policy

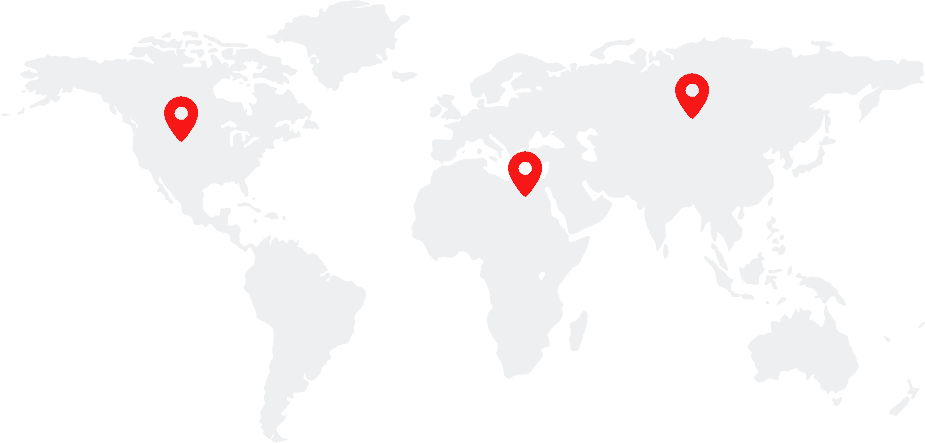

Public Engagement Building (PEB) 721, 9625 Scholars Drive North, MC 0305, La Jolla, CA, United States

Abstract: Technology is often designed and deployed without critical reflection on the values that it embodies. Value trade-offs (between security and privacy, free speech and dignity, autonomy and human agency, and different conceptions of fairness) abound in many technologies that are now achieving great scale in commonly used tech platforms. The decisions made by the people inside […]