Contact Us

Give us a call or drop by anytime, we endeavor to answer all inquiries within 24 hours.

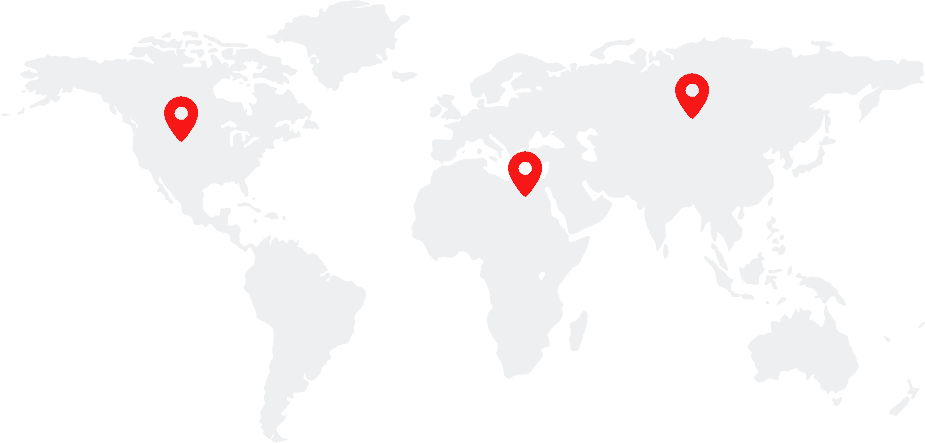

Find us

PO Box 16122 Collins Street West Victoria, Australia

Email us

info@domain.com / example@domain.com

Phone support

Phone: + (066) 0760 0260 / + (057) 0760 0560

Domain Counterfactuals for Trustworthy ML via Sparse Interventions | David I. Inouye

Talk Abstract:

Although incorporating causal concepts into deep learning shows promise for increasing explainability, fairness, and robustness, existing methods require unrealistic assumptions and aim to recover the full latent causal model. This talk proposes an alternative: domain counterfactuals. Domain counterfactuals ask a more concrete question: “What would a sample look like if it had been generated in a different domain (or environment)?” This avoids the challenges of full causal recovery while answering an important causal query. I will theoretically analyze the domain counterfactual problem for invertible causal models and prove an estimation bound that depends on the sparsity of intervention, i.e., the number of intervened causal variables. Leveraging this theory, I will introduce a practical counterfactual estimation algorithm that outperforms baselines. Additionally, I will showcase the potential of domain counterfactuals for counterfactual fairness and domain generalization through preliminary results. Finally, I will connect this work to my broader research focus on distribution matching, highlighting its potential as a foundational tool for building trustworthy machine learning systems.

Bio:

Prof. David I. Inouye is an assistant professor in the Elmore Family School of Electrical and Computer Engineering at Purdue University. His lab focuses on trustworthy machine learning (ML), which aims to make ML systems more robust, causal, and explainable. Currently, he is interested in advancing distribution matching algorithms and applications such as causality, domain generalization, and distribution shift explanations. He is also interested in highly robust distributed learning algorithms on a network of devices, called Internet Learning. His research is funded by ARL, ONR, and NSF. Previously, he was a postdoc at Carnegie Mellon University working with Prof. Pradeep Ravikumar. He completed his Computer Science PhD at The University of Texas at Austin in 2017, advised by Prof. Inderjit Dhillon and Prof. Pradeep Ravikumar. He was awarded the NSF Graduate Research Fellowship (NSF GRFP).